Munin, awk, nfs, funkytown and other madness

Posted on 2010-09-06

I have long since come to terms with my not quite normal degree of geekyness. This time around, I'll tell you about Munin. Actually, scratch that, I'll tell you about how I use Munin. If you want to read about good, sane, best practice-like Munin-usage, you should close this page immediately.

Prelude - what is Munin

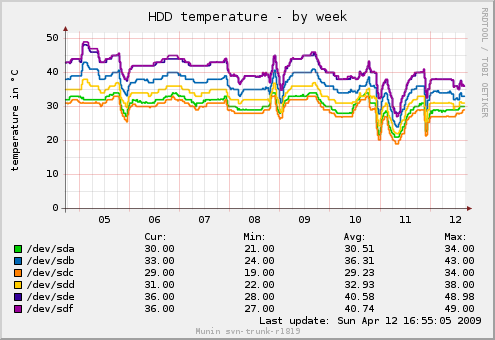

Munin is a monitoring tool for computers. You have a "master" running every 5 minute that connects to a number of "nodes" which reports various types of statistics. This will be statistics like "how full is my disk" and CPU statistics. Or "How hot are my disks":

So to sum up, it's split in two: A master, which consists of "munin-update" that fetches data and populates a database and "munin-graph" that reads said database and draws pretty graphs. There are a couple of more factors involved, but let's keep it simple.

And you have a node, which consists of a daemon listening on a TCP port (typically 4949) and executes plugins when the master asks for data. Traditionally, one graph is one plugin. Which in turn is one process, executed every five minutes.

The first task - load

My "server", kit, is noisy. If I burn the cpu, it spins fans that are quite noisy. I don't really want to listen to that. My workstation (luke), on the other hand, doesn't really notice if I run graphing. However, my workstation isn't really guaranteed to be on or available. Ill-suited for collecting and storing data.

So I shared the RRD database over NFS, set up a copy of the config on my workstation with paths modified, modified both cron scripts and voila - now kit collects the data and luke graphs it.

Of course kit runs varnish, so the munin graphs are cached. And so does kreia - the firewall - so if you view my Munin graphs from the internet, you're going through two different Varnish caches.

This setup is rather ... confusing. Specially when you add the fact that luke and kit run different versions of Munin at the moment. Oh well.

Funkytown

Funkytown is a category I've invented for munin plugins/graphs. Simply put, it's a collection of ridiculous plugins. Here you'll find the meta-plugin "munin_plugins", which counts how many munin plugins I have active.

And a plugin counting how many terminals I have open.

And a plugin to see which commands I'm most fond of.

I also used to have a couple of other weird ones.

In addition to funkytown plugins, I've written a handful plugins (see http://github.com/KristianLyng/munin-plugins (http://github.com/KristianLyng/munin-plugins)). The more useful ones measure resync progress and speed of software raids under Linux, progress of background media scans on my disks and temperature on disks fetched through sg_logs instead of smartctl. (Edit: Almost forgot the varnish_ plugin I've written, which is rather good and part of the Munin package)

munin-node-awk

Ok, all that wasn't really worth a blog post. But this is.

Tonight I re-implemented munin-node in awk.

For those who don't know awk, it's that thing you use instead of cut in "./foo bar | awk '{print $4 }'". It's actually a real programming language. Sorta.

munin-node-awk uses Gawk's TCP/IP support to implement a TCP/IP server and so far supports the CPU and Load Average graph. All without spawning any extra processes.

Take a look at: http://github.com/KristianLyng/munin-node-awk (http://github.com/KristianLyng/munin-node-awk)

Or (assuming my IP doesn't change - sigh) demo: http://cm-84.209.119.36.getinternet.no/munin/awk/luke.awk/index.html (http://cm-84.209.119.36.getinternet.no/munin/awk/luke.awk/index.html)

There are a few reasons why I did it:

- It's HILARIOUS!

- I've been doing stress testing of Varnish, where load and responsiveness can easily be horrible. Having munin-node fire off a few hundred forks every five minutes is unacceptable, yet I need statistics. munin-node-awk allows me to get some basic statistics right now and prototype the idea of a single-process and persistent statistical node.

It's important to note that right now, this is an experiment and a prototype. Which is just an other way of saying it's still fun. I will be writing a varnish plugin soon and probably a vmstat plugin, then see how it pans out. I might also modify munin to do 30 second or 1 minute updates instead of 5 minute updates. We'll see.

Re-using the munin protocol was an easy way to test the concept without re-implementing the actual graphing and data storage.

Using gawk is actually very logical, even if I chose gawk mostly because it is hilarious to work with. First of all, awk is fast. It supports TCP/IP, albeit not too well. It is also very good at parsing data, which is exactly what most Munin plugins do. In fact, you will discover a lot of awk if you look through existing Munin plugins. The CPU graph/plugin for munin-node-awk, for example, was just de-bashified from the cpu graph in munin that uses awk already.

Some cool things:

- I can cache things like "number of CPUs".

- No more double-forking because of "config" versus "fetch".

- Share common data (ie: cpus, RAM, disk space...).

- ?????

- PROFIT!

Err, what do I call a "plugin" now that it's not actually plugged in any more?